February 27, 2023 | Digital Quality Transformation, Year Round Prospective HEDIS®

Why NLP Should Be Part of the Digital Quality Transformation

This month’s blog talks about the importance of unstructured data to achieving digital quality. Unstructured data is clinically valuable and likely here to stay, and NLP can help us embrace it to improve quality.

Rebecca Jacobson, MD, MS, FACMI

Co-Founder, CEO, and President

The journey to digital quality is first and foremost a change in the data used to calculate and measure compliance – from predominantly claims data to predominantly clinical data. I chose those last seven words carefully because health insurers have been using many types of clinical data (including laboratory and pharmacy data) in quality measurement for some time now. And they have been using medical record review, albeit on a sample and retrospectively, for even longer.

However, the data sources that can now be used for Electronic Clinical Data Systems (ECDS) HEDIS measures have expanded to include electronic health record (EHR) data, as well as care management system data, making it possible to use comprehensive clinical data, at scale. Health Information Exchanges (HIEs) and other DAV-certified data integrators can now produce standard CCD files that enable health plans to close care gaps faster and more comprehensively. As the interoperability landscape evolves, we expect that Fast Healthcare Interoperability Resources (FHIR) will replace CCDs over time as the main format for transmitting structured data from providers to payers.

But that doesn’t necessarily mean that we will capture all important clinical data for quality measurement, and it’s important to know why.

Structured vs. Unstructured Data

Clinical data is captured in many ways at the point of care. In a perfect world, providers using an electronic health record (now ~88% of US providers) fully encode their encounters as structured data. In other words, they use drop-downs and other interface elements to partially or fully document patient care.

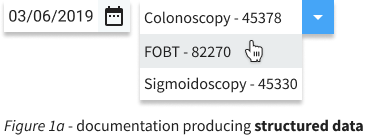

An example in Figure 1a shows the selection of a procedure and date that closes the HEDIS® COL measure. When this documentation practice is used, the resulting data is available as structured data, transmissible in a CCD or FHIR format, and can be easily used to determine and measure compliance.

The challenge arises when providers do not, or cannot, fully encode every encounter as structured data using the appropriate EHR interface element. And this happens to be quite common. What do providers do that produces such unstructured data? Providers may prefer to document patient care using

1. Text boxes that are provided by the EHR allowing free-text entry of additional data

2. EHR-templated clinical notes that may not have the ability to bring in and store data in structured form, or

3. Dictation or typing of clinical notes, reports, or letters.

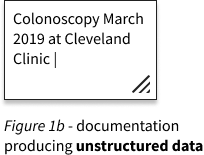

Figure 1b above shows an example of a procedure and date that closes the HEDIS COL measure, documented in a free-entry text box. These documentation practices are often used by providers as time savers, in a world where provider burnout is becoming ubiquitous. This variation in provider use of EHRs is part of why we often see mixed results with CCDs as a source of HEDIS evidence. In many cases, they capture quality data quite well. But in other cases, the CCD is incomplete.

Where it Comes From and Why it Matters

There are only a few recent, quantitative studies that investigate the extent and causes of such documentation variation. Most illuminating is this excellent article by Cohen et al, which looked at EHR data from 809 physicians in 237 practices across 27 states. The authors found that certain clinical documentation categories had high variation among providers within the same practice. These included (1) assessment and diagnosis, (2) problem lists, (3) social history, (4) review of systems, and (5) review and discussion of documents (see the appendix of the paper for definitions). Based on a separate but aligned qualitative analysis, they surmised that the cause of the variation was frequently due to provider preference for unstructured documentation. Readers who are clinically trained will notice immediately why the first three categories of high variation items could be particularly relevant to healthcare quality measurement. Mind you that the variation being studied here was within practice, by design!

There are also inherent differences in the implementation of the CCD/A by EMRs to meet the requirements of Meaningful Use 2 that produce further variation – including the use of optional sections that may not be complete in the transmitted file. Thus, even when providers diligently use their EMRs to fully encode patient care into structured data, the resulting CCD may or may not fully capture and transmit that data.

That leads me to my contention that structured data (CCDs and/or FHIR) alone will not get us all the way there on Digital Quality transformation. In fact, I am particularly worried that structured data alone could produce troubling blind spots in quality measurement especially among populations where providers (1) are more peripheral to the academic centers, (2) have higher patient volumes and less time, (3) get less EHR training, (4) use less sophisticated EHRs, and/or (5) are less sophisticated in how they use their EHRs. And sadly, I suspect that this combination of characteristics will be most prevalent among rural and Medicaid populations – the same populations that are often left behind.

There are other challenges with purely structured data too. For example, we know that behavioral health data is less frequently captured in structured form. We also know that symptoms, signs, and severity indicators are less frequently captured in structured form. This type of data is often important for assessing outcomes. Furthermore, data needed to make measures more patient-centric (such as family history) may also be more frequently unstructured. And finally, at least for now, when providers change EHR systems the legacy data from their previous system is almost always loaded as unstructured clinical notes. Of course, we expect that to change with interoperability improvements, but that could take a while. In short, it is unlikely that structured data alone will be sufficient to get us to the learning health system that we envision.

Embracing Unstructured Data

So that brings me to the solution, which I believe is to stop ignoring provider documentation preferences, and instead, embrace them. Natural Language Processing (NLP) technologies have become sufficient to capture quality evidence from unstructured data sources for many measure programs at an accuracy that is close to or equivalent to human abstraction. These systems are available from vendors and are also developed in-house by health plans. Health plans are already implementing centralized platforms for better managing unstructured data. As the hybrid methodology is retired, we can expect NLP to be used even more to reduce the labor cost of medical record review across populations. We think health plans will increasingly need NLP systems to help them assess the compliance of individual members and more smartly determine which gaps to prioritize and how to close them.

What are some important first steps towards using unstructured data to close care gaps across populations, analogous to what is happening now with CCDs and eventually with FHIR? In next month’s blog, I will be looking at how health plans and other risk-bearing entities can plan for the use of unstructured data as a core data asset, helping them move to digital quality and prospective, patient-centered, quality measurement and improvement.